RVC helps “train” driverless vehicles to operate in winter conditions

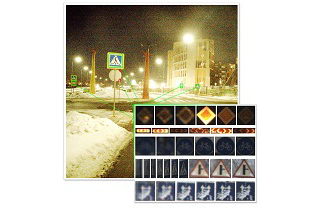

RVC has published the world's first open “winter” dataset. The database contains more than 600,000 images taken in difficult weather conditions, more than 20,000 of which were annotated manually, as well as lidar data that make it possible to build a real-time 3D map of the space surrounding the car.

The database is freely available under the open CC BY 4.0 license. A team of developers from the Skoltech Intelligent Space Robotics Lab collected the “winter” dataset for Ice Vision, an international competition in software for driverless vehicles. What sets it apart is its focus on “training” autonomous vehicles to operate in Russian winter conditions: glaze ice, wet snow, poor visibility, and oncoming sunlight.

“The availability of high-quality, comprehensive datasets is one of the cornerstones of modern computer vision systems. Unfortunately, existing open datasets for driverless vehicles contain relatively little data collected in winter, which deprives developers and researchers of the ability to test their systems in difficult and changeable conditions,” said Artem Pavlov, a postgraduate student at Skoltech and head of the Open Skoltech Car (OS:Car) project, which was used to collect the Ice Vision dataset.

The database consists of losslessly compressed images forming video sequences captured at 30 Hz on Russian roads in winter, under various weather conditions and at different times of day. The video was recorded with two 5 MP colour cameras with a “global shutter”. A global-shutter camera exposes the whole frame at once, which prevents distortion when shooting moving objects or when the car is travelling at speed; this is particularly important for visual localization and mapping algorithms.

The dataset also includes laser scans of the surroundings from a 64-channel 3D lidar capable of measuring 2.2 million points per second, as well as data from satellite navigation receivers. Together, these data make it possible to build a system that accurately determines the car's location and “sees” the surrounding space in 3D, so that it can navigate the current traffic situation and make correct decisions.

In addition, the database contains manual annotations indicating which road signs appear in the annotated frames and where. Such annotations make it possible to build road sign recognition systems, in particular ones based on artificial neural networks. This opens the door not only to automatic semantic road mapping but also to driverless vehicles that can drive on public roads in compliance with traffic regulations without a pre-built detailed map, and that can respond to temporary changes on the road, such as roadworks.

“Teams of young researchers have virtually no opportunity to create their own database. The published dataset could become a good tool for any team to “train” its car to “see” road signs and the traffic situation in both summer and winter. It can now be used by manufacturers of driverless vehicles and by developers of custom solutions for them,” said Mikhail Antonov, Deputy CEO – Director for Development of Innovative Infrastructure at RVC.